https://cafe.daum.net/SmartRobot/RoVa/2408

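| Item | Details |
| --- | --- |
| MODEL | centerpoint_pillars |
| DATASET | 2025 Autonomous Driving AI Challenge dataset (128ch + 64ch) |
| TEST CODE | Verified with val.py (2025) from the competition's baseline code |
| TEST DATASET | Only the 64ch data, extracted from the train split of the 2024 dataset |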

centerpoint.yaml

CLASS_NAMES: ['Vehicle', 'Pedestrian', 'Cyclist']

DATA_CONFIG:
    _BASE_CONFIG_: cfgs/dataset_configs/custom_pillars_dataset.yaml
    POINT_CLOUD_RANGE: [-75.2, -75.2, -2.0, 75.2, 75.2, 4.0]
    DATA_PROCESSOR:
        - NAME: mask_points_and_boxes_outside_range
          REMOVE_OUTSIDE_BOXES: True

        - NAME: shuffle_points
          SHUFFLE_ENABLED: {
            'train': True,
            'test': True
          }

        - NAME: transform_points_to_voxels
          VOXEL_SIZE: [ 0.2, 0.2, 6.0 ]
          MAX_POINTS_PER_VOXEL: 20
          MAX_NUMBER_OF_VOXELS: {
            'train': 250000, #150000
            'test': 250000 # 150000
          }

MODEL:
    NAME: CenterPoint

    VFE:
        NAME: PillarVFE
        WITH_DISTANCE: False
        USE_ABSLOTE_XYZ: True
        USE_NORM: True
        NUM_FILTERS: [ 64, 64 ]

    MAP_TO_BEV:
        NAME: PointPillarScatter
        NUM_BEV_FEATURES: 64

    BACKBONE_2D:
        NAME: BaseBEVBackbone
        LAYER_NUMS: [ 3, 5, 5 ]
        LAYER_STRIDES: [ 1, 2, 2 ]
        NUM_FILTERS: [ 64, 128, 256 ]
        UPSAMPLE_STRIDES: [ 1, 2, 4 ]
        NUM_UPSAMPLE_FILTERS: [ 128, 128, 128 ]

    DENSE_HEAD:
        NAME: CenterHead
        CLASS_AGNOSTIC: False

        CLASS_NAMES_EACH_HEAD: [
            ['Vehicle', 'Pedestrian', 'Cyclist']
        ]

        SHARED_CONV_CHANNEL: 64
        USE_BIAS_BEFORE_NORM: True
        NUM_HM_CONV: 2
        SEPARATE_HEAD_CFG:
            HEAD_ORDER: ['center', 'center_z', 'dim', 'rot']
            HEAD_DICT: {
                'center': {'out_channels': 2, 'num_conv': 2},
                'center_z': {'out_channels': 1, 'num_conv': 2},
                'dim': {'out_channels': 3, 'num_conv': 2},
                'rot': {'out_channels': 2, 'num_conv': 2},
            }

        TARGET_ASSIGNER_CONFIG:
            FEATURE_MAP_STRIDE: 1
            NUM_MAX_OBJS: 500
            GAUSSIAN_OVERLAP: 0.1
            MIN_RADIUS: 2

        LOSS_CONFIG:
            LOSS_WEIGHTS: {
                'cls_weight': 1.5, # class imbalance
                'loc_weight': 2.0,
                'code_weights': [1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0]
            }
            CLASS_WEIGHT : [1.5,2.5,2.0]

        POST_PROCESSING:
            SCORE_THRESH: 0.1
            POST_CENTER_LIMIT_RANGE: [-75.2, -75.2, -2.0, 75.2, 75.2, 4.0]
            MAX_OBJ_PER_SAMPLE: 500
            NMS_CONFIG:
                NMS_TYPE: nms_gpu
                NMS_THRESH: 0.7
                NMS_PRE_MAXSIZE: 4096
                NMS_POST_MAXSIZE: 500

    POST_PROCESSING:
        RECALL_THRESH_LIST: [0.3, 0.5, 0.7]
        EVAL_METRIC: waymo

OPTIMIZATION:
    BATCH_SIZE_PER_GPU: 1
    NUM_EPOCHS: 80

    OPTIMIZER: adam_onecycle
    LR: 0.003
    WEIGHT_DECAY: 0.01
    MOMENTUM: 0.9

    MOMS: [0.95, 0.85]
    PCT_START: 0.4
    DIV_FACTOR: 10
    DECAY_STEP_LIST: [35, 45]
    LR_DECAY: 0.1
    LR_CLIP: 0.0000001

    LR_WARMUP: False
    WARMUP_EPOCH: 1

    GRAD_NORM_CLIP: 10
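To make the OPTIMIZATION values more concrete, here is a minimal sketch of the learning-rate and momentum curve they imply. It assumes a fastai-style one-cycle policy with cosine annealing. `FINAL_DIV` and the helper names are assumptions for illustration, not values from the config, and the scheduler in the baseline code may differ in detail.

```python
import math

LR = 0.003            # peak learning rate (LR)
DIV_FACTOR = 10       # starting LR = LR / DIV_FACTOR
PCT_START = 0.4       # fraction of training spent ramping up
MOMS = (0.95, 0.85)   # momentum at the start and at the LR peak
FINAL_DIV = 1e4       # ASSUMPTION: final LR = starting LR / 1e4


def cos_anneal(start, end, pct):
    """Cosine interpolation from start (pct=0) to end (pct=1)."""
    return end + (start - end) / 2 * (math.cos(math.pi * pct) + 1)


def one_cycle(progress):
    """Return (lr, momentum) for a training progress in [0, 1]."""
    low_lr = LR / DIV_FACTOR
    if progress < PCT_START:
        # Phase 1: LR rises while momentum falls.
        pct = progress / PCT_START
        return cos_anneal(low_lr, LR, pct), cos_anneal(MOMS[0], MOMS[1], pct)
    # Phase 2: LR decays while momentum recovers.
    pct = (progress - PCT_START) / (1 - PCT_START)
    return cos_anneal(LR, low_lr / FINAL_DIV, pct), cos_anneal(MOMS[1], MOMS[0], pct)


for p in (0.0, 0.2, 0.4, 0.7, 1.0):
    lr, mom = one_cycle(p)
    print(f"progress={p:.1f}  lr={lr:.6f}  momentum={mom:.3f}")
```

With NUM_EPOCHS: 80 and PCT_START: 0.4, the peak learning rate would be reached around epoch 32 under this assumption.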

dataset.yaml

DATASET: 'CustomAvDataset'
DATA_PATH: '/mnt/c/data/custom_av'

POINT_CLOUD_RANGE: [-70.0, -70.0, -4.0, 70.0, 70.0, 4.0]

MAP_CLASS_TO_KITTI: {
    'Vehicle': 'Car',
    'Pedestrian': 'Pedestrian',
    'Cyclist': 'Cyclist',
}

DATA_SPLIT: {
    'train': train,
    'test': val
}

INFO_PATH: {
    'train': [custom_av_infos_train.pkl],
    'test': [custom_av_infos_val.pkl],
}

POINT_FEATURE_ENCODING: {
    encoding_type: absolute_coordinates_encoding,
    used_feature_list: ['x', 'y', 'z'],
    src_feature_list: ['x', 'y', 'z', 'intensity'],
}

DATA_AUGMENTOR:
    DISABLE_AUG_LIST: ['placeholder']
    AUG_CONFIG_LIST:
        - NAME: gt_sampling
          USE_ROAD_PLANE: False
          DB_INFO_PATH:
              - custom_av_dbinfos_train.pkl
          PREPARE: {
              filter_by_min_points: ['Vehicle:5', 'Pedestrian:5', 'Cyclist:5'],
          }

          SAMPLE_GROUPS: ['Vehicle:15', 'Pedestrian:10', 'Cyclist:10']
          NUM_POINT_FEATURES: 4
          DATABASE_WITH_FAKELIDAR: False
          REMOVE_EXTRA_WIDTH: [0.0, 0.0, 0.0]
          LIMIT_WHOLE_SCENE: True

        - NAME: random_world_flip
          ALONG_AXIS_LIST: ['x', 'y']

        - NAME: random_world_rotation
          WORLD_ROT_ANGLE: [-0.78539816, 0.78539816]

        - NAME: random_world_scaling
          WORLD_SCALE_RANGE: [0.95, 1.05]

DATA_PROCESSOR:
    - NAME: mask_points_and_boxes_outside_range
      REMOVE_OUTSIDE_BOXES: True

    - NAME: shuffle_points
      SHUFFLE_ENABLED: {
        'train': True,
        'test': True
      }

    - NAME: transform_points_to_voxels
      VOXEL_SIZE: [0.1, 0.1, 0.15]
      MAX_POINTS_PER_VOXEL: 5
      MAX_NUMBER_OF_VOXELS: {
        'train': 150000,
        'test': 150000
      }
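As an illustration of POINT_FEATURE_ENCODING in dataset.yaml, the sketch below shows what selecting `used_feature_list` out of `src_feature_list` amounts to: the intensity column is dropped and only x, y, z reach the network. The function name and the sample points are made up for this example. This is a plain-Python approximation, not the baseline's actual encoder.

```python
SRC_FEATURES = ['x', 'y', 'z', 'intensity']   # src_feature_list
USED_FEATURES = ['x', 'y', 'z']               # used_feature_list


def encode_points(points, src=SRC_FEATURES, used=USED_FEATURES):
    """Keep only the columns named in `used`, in that order."""
    idx = [src.index(name) for name in used]
    return [[row[i] for i in idx] for row in points]


# Two made-up points in (x, y, z, intensity) order.
raw = [
    [10.0, -2.5, 0.3, 0.71],
    [42.1, 7.0, -1.2, 0.05],
]
encoded = encode_points(raw)
print(encoded)  # each point now has three values; intensity is gone
```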

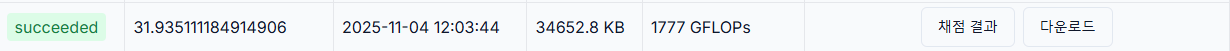

Leaderboard

| Leaderboard TEST | baseline | Proposed improvement |
| --- | --- | --- |
| VEHICLE_AP/L1 | 0.5973 | 0.4786 |
| VEHICLE_AP/L2 | 0.5687 | 0.4550 |
| PEDESTRIAN_AP/L1 | 0.3480 | 0.1900 |
| PEDESTRIAN_AP/L2 | 0.3372 | 0.1839 |
| CYCLIST_AP/L1 | 0.3448 | 0.3284 |
| CYCLIST_AP/L2 | 0.3351 | 0.3191 |
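To see how large the gap is per class, the following sketch computes the absolute and relative change from the baseline for each row of the leaderboard table. The values are copied from the table; the helper name is just for illustration.

```python
# (baseline, modified) AP values copied from the leaderboard table.
scores = {
    "VEHICLE_AP/L1": (0.5973, 0.4786),
    "VEHICLE_AP/L2": (0.5687, 0.4550),
    "PEDESTRIAN_AP/L1": (0.3480, 0.1900),
    "PEDESTRIAN_AP/L2": (0.3372, 0.1839),
    "CYCLIST_AP/L1": (0.3448, 0.3284),
    "CYCLIST_AP/L2": (0.3351, 0.3191),
}


def relative_change(baseline, modified):
    """Percentage change of `modified` relative to `baseline`."""
    return (modified - baseline) / baseline * 100.0


for name, (base, mod) in scores.items():
    print(f"{name:18s} {mod - base:+.4f}  ({relative_change(base, mod):+.1f}%)")
```

Every metric is lower than the baseline. Pedestrian AP drops the most and Cyclist AP the least.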

Conclusion

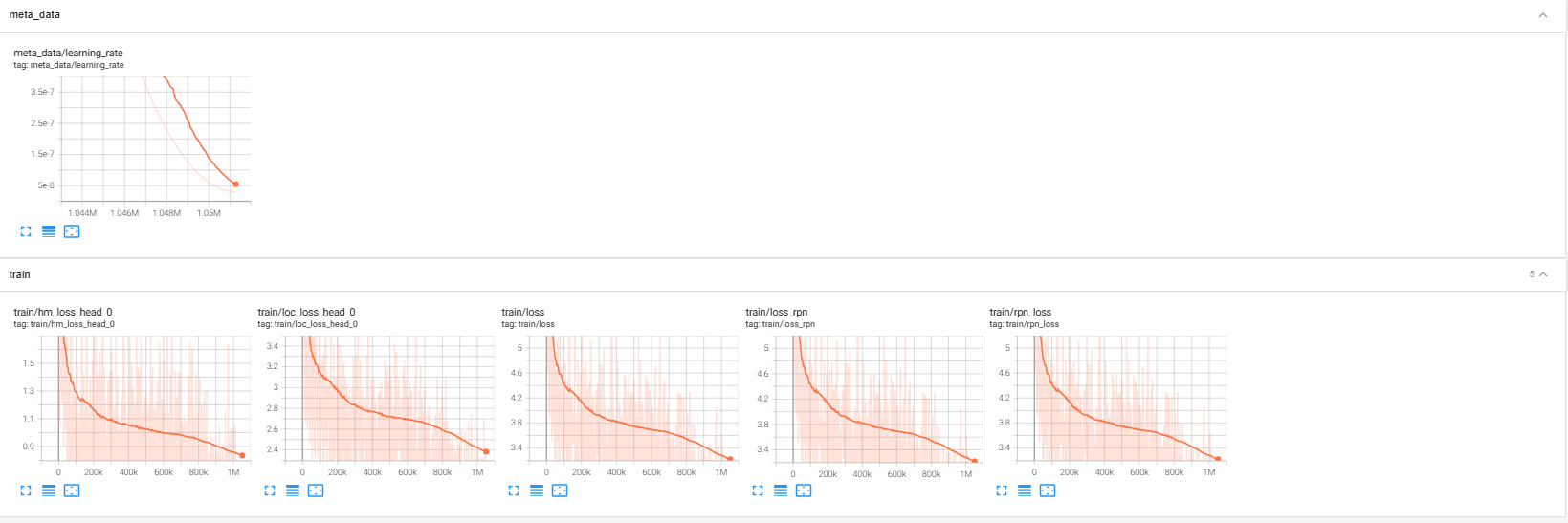

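Training the baseline with BATCH_SIZE_PER_GPU: 1 for 80 epochs does not seem to be enough to match the performance of the provided pretrained model.

I also reduced the voxel size from 0.25 to 0.2. With centerpoint_pillar, however, shrinking the voxel size also increased inference time, so it is probably not what the first-place team did, and it does not look like a viable way to improve performance.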
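The higher inference time is consistent with simple grid arithmetic. The sketch below computes the BEV grid for the POINT_CLOUD_RANGE and VOXEL_SIZE in centerpoint.yaml, then estimates how many more pillars a 0.2 grid has than a 0.25 grid. The post does not state the range used with 0.25, so the ratio assumes the same range for both. That is an assumption.

```python
POINT_CLOUD_RANGE = [-75.2, -75.2, -2.0, 75.2, 75.2, 4.0]  # from centerpoint.yaml


def grid_size(pc_range, voxel_size):
    """Number of cells along x, y, z for a given range and voxel size."""
    return tuple(
        round((pc_range[i + 3] - pc_range[i]) / voxel_size[i]) for i in range(3)
    )


nx, ny, nz = grid_size(POINT_CLOUD_RANGE, [0.2, 0.2, 6.0])
cells = nx * ny
print(f"grid: {nx} x {ny} x {nz}, BEV cells: {cells}")

# ASSUMPTION: same x/y range for both voxel sizes, so the cell count
# scales with the inverse square of the pillar edge length.
ratio = (0.25 / 0.2) ** 2
print(f"pillar count ratio (0.2 vs 0.25): {ratio:.4f}")
```

Because FEATURE_MAP_STRIDE is 1, the head also predicts on the full-resolution grid, so the extra cells add cost in both the backbone and the head. The commented-out 150000 next to MAX_NUMBER_OF_VOXELS: 250000 suggests the voxel budget was raised as well.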

Graduation project video

Raw point cloud

Voxel_RCNN

Four-way split-screen video

I made a video of about one minute in this format.